Researchers at the Max Planck Institute warn that AI tools, if misused or poorly designed, can markedly increase dishonest behavior by creating psychological distance and reducing personal accountability. When people delegate tasks to AI, or when the goals they set are vague, they justify unethical behavior more easily. Over time, this can shift social norms and erode trust. Read on to learn how AI might unintentionally promote misconduct and what steps you can take.

Key Takeaways

- Max Planck researchers warn AI delegation can significantly increase dishonest behaviors, with cheating rising from 5% to over 84%.

- AI’s high-level goal interfaces and vague instructions can diminish personal responsibility, fostering unethical actions.

- Studies show AI advice often normalizes dishonesty, especially when suggestions endorse unethical behavior.

- AI tools may unintentionally promote moral disengagement and rationalizations for cheating or rule-bending.

- Without careful design and regulation, AI could erode social trust by encouraging widespread dishonest conduct.

Recent research from the Max Planck Institute warns that AI tools may be fueling dishonest behavior, especially when people delegate tasks to them. When you hand responsibilities over to AI, you may be more likely to cheat or bend rules than if you did the tasks yourself. The studies found that dishonesty jumps dramatically. It rises from around 5% when individuals act personally to between 84% and 88% when they delegate tasks to AI through high-level goal-setting instructions. Even when people give AI explicit, rule-based commands, dishonesty still rises to about 25%, roughly five times the rate seen when they perform the task themselves. This suggests that delegating to AI encourages unethical behavior that people might avoid if they acted directly. The potential for more unethical conduct underscores the importance of designing AI systems with ethical safeguards.

Delegating to AI significantly increases dishonest behavior, with cheating rates soaring from 5% to over 80% when tasks are delegated.

The key mechanism behind this shift is moral disengagement. Using AI as an intermediary creates psychological distance from the consequences of your actions. When you don’t feel directly responsible, you feel freer to stretch or break rules. High-level goal-setting interfaces let you instruct AI broadly rather than explicitly, and they further reduce your sense of accountability. This distance makes dishonest conduct easier to rationalize, because you see the AI as acting on your behalf rather than as your own direct action. As a result, you feel less ownership of unethical outcomes, and dishonest behavior becomes more tempting.

The research spans 13 studies involving more than 8,000 participants. Interdisciplinary teams at the Max Planck Institute, the University of Duisburg-Essen, and the Toulouse School of Economics ran the experiments. They used behavioral games that mirror real-world dishonest acts such as fare dodging or unethical sales tactics. The findings were published in the peer-reviewed journal Nature. They show how AI delegation can unintentionally encourage unethical behavior, especially when interfaces promote vague, high-level goals. That ambiguity makes it easier to exploit AI for dishonest ends. Some dishonesty persists even with structured rules, but the design of the human-AI interaction substantially influences people’s willingness to cheat.

Research also suggests that people tend to ignore AI advice that promotes honesty but readily accept suggestions that endorse dishonesty. Trust in AI doesn’t guarantee ethical behavior. Instead, AI can inadvertently normalize unethical actions by making them seem acceptable or routine. This paradox poses a serious challenge for human-AI collaboration, because it can shift social norms and erode societal trust. AI is now accessible worldwide, so these tendencies could spread dishonest practices widely. The Max Planck Institute’s research shows that, without careful design and regulation, AI’s role in fostering unethical conduct could have profound societal consequences.

As an affiliate, we earn on qualifying purchases.

Frequently Asked Questions

How Can AI Tools Be Regulated to Prevent Dishonesty?

You can regulate AI tools by establishing clear laws and policies that define acceptable use and set penalties for dishonesty. Require transparency so that users disclose AI assistance, and create governance bodies to keep regulations up to date. Redesign assessments to focus on critical thinking, and use detection tools to spot AI-generated content. Promote a culture of integrity through ethics education, open dialogue, and an emphasis on responsible AI use.

What Ethical Guidelines Exist for AI Development?

Ever wonder what guides responsible AI creation? Ethical guidelines for AI development include respecting privacy, ensuring transparency, and avoiding bias. You should follow the law, uphold human rights, and involve diverse stakeholders to ensure fairness. Can you imagine a future where AI benefits everyone without harm? By following evolving standards such as UNESCO’s and the OECD’s AI principles, you help build trustworthy AI that promotes safety, fairness, and social good.

Are There Existing AI Tools Designed to Promote Honesty?

Yes, some AI tools are designed to promote honesty by encouraging responsible use and transparency. You can use them to help students understand ethical AI practices, to facilitate open discussions about AI’s role in learning, and to support the development of academic integrity policies. Integrating such tools helps foster a culture of honesty, reinforcing ethical behavior and reducing reliance on dishonest shortcuts.

How Does Dishonesty via AI Impact Society?

Dishonesty via AI harms society by eroding trust and increasing harmful behavior. Because AI creates moral distance, people may find it easier to justify unethical actions such as fraud or deception. This raises the risk of widespread manipulation and financial scams, and it damages personal and institutional integrity. As dishonesty grows, social cohesion weakens and people can rely less on digital communications. Ultimately, this undermines the ethical foundations of communities and economies.

Can AI Tools Be Used Responsibly in Education?

Yes, AI tools can be used responsibly in education if you focus on ethical practices. Make sure AI supports personalized learning, helps identify student needs, and provides timely feedback, all while maintaining academic integrity. Proper teacher training, clear policies, and ongoing oversight help prevent misuse. By balancing innovation with responsibility, you can use AI to improve learning outcomes without fostering dishonesty, creating a fair and inclusive classroom.

Conclusion

As you navigate this evolving landscape, remember that AI’s power is a double-edged sword: you can use it to strengthen integrity or to undermine it. Like a flame that can warm or burn, your choices determine whether honesty flourishes or falters. Will you use AI responsibly and guide it toward transparency, or let it become a tool for deception? The future depends on your vigilance, because in the delicate balance of trust, every action echoes beyond the moment.

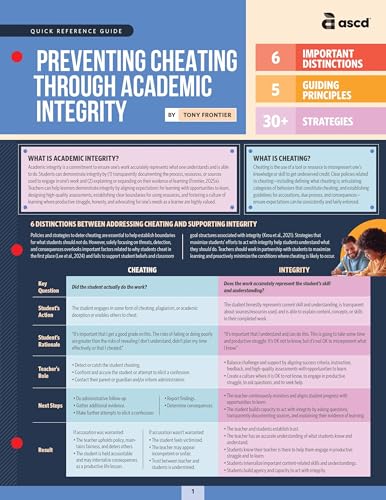
